Normals and the Inverse Transpose, Part 2: Dual Spaces

In the first part of this series, we learned about Grassmann algebra, and concluded that normal vectors in 3D can be interpreted as bivectors. To transform bivectors, we need to use a different matrix (in general) than the one that transforms ordinary vectors. Using a canonical basis for bivectors, we found that the matrix required is the cofactor matrix, which is proportional to the inverse transpose. This provides at least a partial explanation of why the inverse transpose is used to transform normal vectors.

However, we also left a few loose ends untied. We found out about the cofactor matrix, but we didn’t really see how that connects to the algebraic derivation that transforming a plane equation $N \cdot x + d = 0$ involves the inverse transpose. I just sort of handwaved the proportionality between the two.

Moreover, we saw that Grassmann $k$-vectors provide vectorial geometric objects with a natural interpretation as carrying units of length, area, and volume, owing to their scaling behavior. But we didn’t find anything similar for densities—units of inverse length, area, or volume.

As we’ll see in this article, there’s one more geometric concept we need to complete the picture. Putting this new concept together with the Grassmann algebra we’ve already learned will turn out to clarify and resolve these remaining issues.

Without further ado, let’s dive in!

Functions As Vectors

Most of this article will be concerned with functions taking and returning vectors of various kinds. To understand what follows, it’s necessary to make a bit of a mental flip, which you might find quite counterintuitive if you haven’t encountered it before.

The flip is this: functions that map into a vector space are themselves vectors.

That statement might not appear to make any sense at first! Vectors and functions are totally different kinds of things, right, like apples and…chairs? How can a function literally be a vector?

If you look up the technical definition of a vector space, you’ll find that it’s quite nonspecific about what the structure of a vector has to be. We often think of vectors as arrows with a magnitude and direction, or as ordered lists of numbers (coordinates). However, all you truly need for a vector space is a set of things that support two basic operations: being added together, and being multiplied by scalars (here, real numbers). These operations just need to obey a few reasonable axioms.

Well, functions can be added together! If we have two functions $f$ and $g$, we can add them pointwise to produce a new function $h$, defined by $h(x) = f(x) + g(x)$ for every point $x$ in the domain. Likewise, we can multiply a function pointwise by a scalar: $g(x) = a \cdot f(x)$. These operations do satisfy the vector space axioms, and therefore any set of compatible functions forms a vector space in its own right: a function space.

To put it a bit more formally: given a domain set $X$ (any kind of set, not necessarily a vector space itself) and a range vector space $V$, the set of functions $f: X \to V$ forms a vector space under pointwise addition and scalar multiplication. You need the range to be a vector space so you can add and multiply the outputs of the functions, but the domain isn’t required to be a vector space—or even a “space” per se at all; it could be a discrete set.
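As a minimal sketch of pointwise addition and scaling, here are two functions from the real numbers into $\Bbb R^2$ combined like vectors. The helper names `add` and `scale` are my own, chosen only for illustration.

```python
from typing import Callable, Tuple

Vec2 = Tuple[float, float]
Func = Callable[[float], Vec2]  # a function from the domain (floats) into R^2


def add(f: Func, g: Func) -> Func:
    """Pointwise sum: (f + g)(x) = f(x) + g(x)."""
    def h(x: float) -> Vec2:
        fx, gx = f(x), g(x)
        return (fx[0] + gx[0], fx[1] + gx[1])
    return h


def scale(a: float, f: Func) -> Func:
    """Pointwise scalar multiple: (a * f)(x) = a * f(x)."""
    def h(x: float) -> Vec2:
        fx = f(x)
        return (a * fx[0], a * fx[1])
    return h


def f(x: float) -> Vec2:
    return (x, 1.0)


def g(x: float) -> Vec2:
    return (2.0 * x, x * x)


# A linear combination of functions is again a function of the same kind.
h = add(f, scale(3.0, g))
print(h(2.0))  # f(2) + 3*g(2) = (2, 1) + (12, 12)
```

Note that the arithmetic only ever touches the outputs, which is why the domain does not need any vector structure of its own.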

This realization that functions can be treated as vectors then lets us apply linear-algebra techniques to understand and work with functions—a large branch of mathematics called functional analysis.

Linear Forms and the Dual Space

From this point forward, we’ll be concerned with a specific class of functions known as linear forms.

If we have some vector space $V$ (such as 3D space $\Bbb R^3$, for instance), then a linear form on $V$ is defined as a linear function $f: V \to \Bbb R$. That is, it’s a linear function that takes a vector argument and returns a scalar.

(A note for the mathematicians: in this article I’m only talking about finite-dimensional vector spaces over $\Bbb R$, so I may occasionally make a statement that doesn’t hold for general vector spaces. Sorry!)

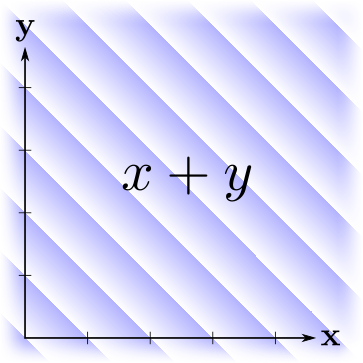

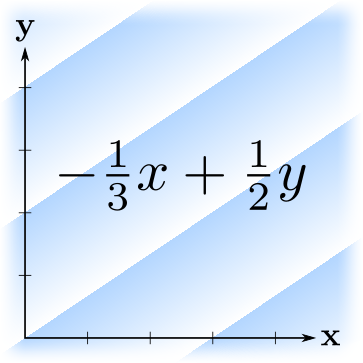

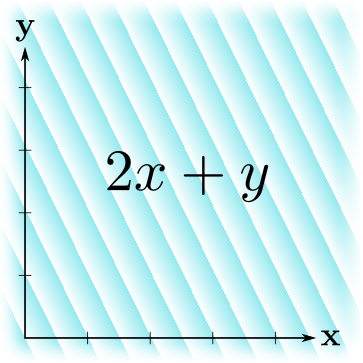

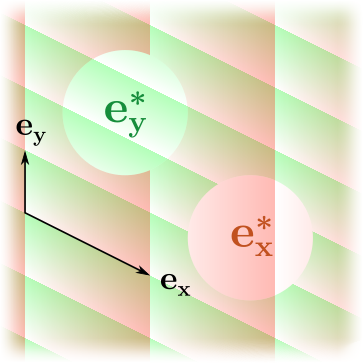

I like to visualize a linear form as a set of parallel, uniformly spaced planes (3D) or lines (2D): the level sets of the function at intervals of one unit in the output. Here are some examples:

The gradients here indicate the linear form’s orientation—the function is increasing with the gradient’s opacity; the discrete lines mark where its output crosses an integer, and the opacity wraps around to zero. Note that “bigger” linear forms (in the sense of bigger output values) have more tightly-spaced lines, and vice versa.

As elaborated in the previous section, linear forms on a given vector space can themselves be treated as vectors, in their own function space. Linear combinations of linear functions are still linear, so they do form a closed vector space in their own right.

This vector space—the set of all linear forms on $V$—is important enough that it has its own name: the dual space of $V$. It’s denoted $V^*$. The elements of the dual space (the linear forms) are then called dual vectors, or sometimes covectors.

The Natural Pairing

The fact that dual vectors are linear functions, and not general functions from $V$ to $\Bbb R$, strongly restricts their behavior. Linear forms on an $n$-dimensional vector space have only $n$ degrees of freedom, versus the infinite degrees of freedom that a general function has. To put it another way, $V^*$ has the same dimensionality as $V$.

To see this more concretely: a linear form on $\Bbb R^n$ can be fully specified by the values it returns when you evaluate it on the $n$ vectors of a basis. The result it returns for any other vector can then be derived by linearity. For example, if $f$ is a linear form on $\Bbb R^3$, and $v = (x, y, z)$ is an arbitrary vector, then: $$ \begin{aligned} f(v) &= f(x {\bf e_x} + y {\bf e_y} + z {\bf e_z}) \\ &= x \, f({\bf e_x}) + y \, f({\bf e_y}) + z \, f({\bf e_z}) \end{aligned} $$ If you’re thinking that the above looks awfully like a dot product between $(x, y, z)$ and $\bigl(f({\bf e_x}), f({\bf e_y}), f({\bf e_z}) \bigr)$—you’re right!
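To make this concrete, here is a small sketch that builds a linear form on $\Bbb R^3$ from nothing but its three values on the basis vectors, then checks linearity on a sample combination. The function name `linear_form_from_basis_values` and the sample numbers are assumptions made for the example.

```python
def linear_form_from_basis_values(fx, fy, fz):
    """Return the linear form f on R^3 with f(e_x) = fx, f(e_y) = fy, f(e_z) = fz."""
    def f(v):
        x, y, z = v
        # f(v) = x f(e_x) + y f(e_y) + z f(e_z), by linearity
        return x * fx + y * fy + z * fz
    return f


f = linear_form_from_basis_values(2.0, -1.0, 0.5)

u = (1.0, 2.0, 4.0)
v = (3.0, 0.0, -2.0)
combo = tuple(2.0 * ui + vi for ui, vi in zip(u, v))  # the vector 2u + v

# Linearity: f(2u + v) should equal 2 f(u) + f(v).
print(f(combo), 2.0 * f(u) + f(v))
```

Three numbers are all the state this closure holds, which is the "only $n$ degrees of freedom" claim in code form.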

Indeed, the operation of evaluating a linear form has the properties of a product between the dual space and the base vector space: $V^* \times V \to \Bbb R$. This product is called the natural pairing.

Like the vector dot product, the natural pairing results in a real number, and is bilinear—linear on both sides. However, here we’re taking a product not of two vectors, but of a dual vector with a “plain” vector. The linearity on the left side comes from pointwise adding/multiplying linear forms; that on the right comes from the linear forms being, well, linear in their vector argument.

Going forward, I’ll denote the natural pairing by angle brackets, like this: $\langle w, v \rangle$. Here $w$ is a dual vector in $V^*$, and $v$ is a vector in $V$. To reiterate, this is simply evaluating the linear form $w$, as a function, on the vector $v$. But because functions are vectors, and dual vectors in particular are linear functions, this operation also has the properties of a product.

The earlier expansion of $f(v)$ looks like this in angle-bracket notation, with the dual vector $w$ playing the role of $f$: $$ \begin{aligned} \langle w, v \rangle &= \bigl\langle w, \, x {\bf e_x} + y {\bf e_y} + z {\bf e_z} \bigr\rangle \\ &= x \langle w, {\bf e_x} \rangle + y \langle w, {\bf e_y} \rangle + z \langle w, {\bf e_z} \rangle \end{aligned} $$ Note how this now looks like “just” an application of the distributive property, which it is!

The Dual Basis

The above construction can also be used to define a canonical basis for $V^*$, for a given basis on $V$. Namely, we want to make the numbers $\langle w, {\bf e_x} \rangle, \langle w, {\bf e_y} \rangle, \langle w, {\bf e_z} \rangle$ be the coordinates of $w$ with respect to this basis, the same way that $x, y, z$ are coordinates with respect to $V$’s basis. We can do this by defining dual basis vectors ${\bf e_x^*}, {\bf e_y^*}, {\bf e_z^*}$, according to the following constraints: $$ \begin{aligned} \langle {\bf e_x^*}, {\bf e_x} \rangle &= 1 \\ \langle {\bf e_x^*}, {\bf e_y} \rangle &= 0 \\ \langle {\bf e_x^*}, {\bf e_z} \rangle &= 0 \end{aligned} $$ and similarly for ${\bf e_y^*}, {\bf e_z^*}$. The nine total constraints can be summarized as: $$ \langle {\bf e}_i^*, {\bf e}_j \rangle = \begin{cases} 1 & \text{if } i = j, \\ 0 & \text{if } i \neq j, \end{cases} \quad i, j \in \{ {\bf x, y, z} \} $$ This dual basis always exists and is unique, given a valid basis on $V$ to start from.
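One way to compute a dual basis in practice, sketched here for 2D: if the basis vectors are the columns of a matrix, the dual basis vectors are the rows of its inverse (a standard linear-algebra fact, since that is exactly what "row times column equals 1 or 0" means). The function names `pair` and `dual_basis_2d` are hypothetical, and all coordinates are expressed in the standard basis and its dual.

```python
from fractions import Fraction


def pair(w, v):
    """Natural pairing <w, v>, with both arguments in standard coordinates."""
    return w[0] * v[0] + w[1] * v[1]


def dual_basis_2d(e1, e2):
    """Dual basis of (e1, e2): the rows of the inverse of the matrix [e1 e2]."""
    det = e1[0] * e2[1] - e2[0] * e1[1]
    if det == 0:
        raise ValueError("e1 and e2 are not linearly independent")
    d1 = (e2[1] / det, -e2[0] / det)
    d2 = (-e1[1] / det, e1[0] / det)
    return d1, d2


# A skewed (non-orthonormal) basis, using exact rational arithmetic.
e1 = (Fraction(1), Fraction(0))
e2 = (Fraction(1), Fraction(1))
d1, d2 = dual_basis_2d(e1, e2)

# The four defining constraints <d_i, e_j> = 1 if i == j else 0.
print(pair(d1, e1), pair(d1, e2), pair(d2, e1), pair(d2, e2))
```

For this skewed basis, `d1` comes out with a nonzero second coordinate even though `e1` lies along the $x$ axis: its level sets have to run parallel to `e2`, so it cannot simply point along `e1`.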

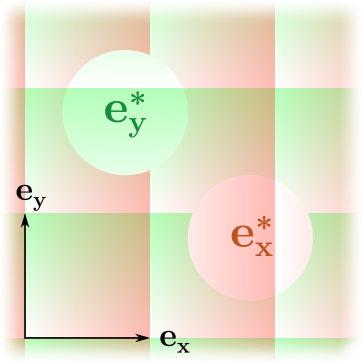

Geometrically speaking, the dual basis consists of linear forms that measure the distance along each axis—but the level sets of those linear forms are parallel to all the other axes. They’re not necessarily perpendicular to the same axis that they’re measuring, unless the basis happens to be orthonormal. This feature will be important a bit later!

By way of example, here are a couple of vector bases together with their corresponding dual bases:

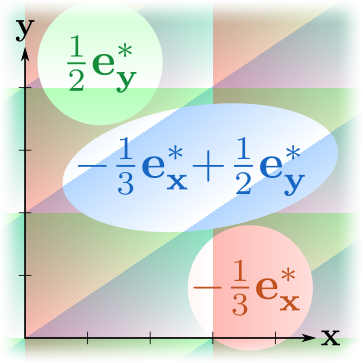

Here’s an example of a linear form decomposed into basis components, $w = p {\bf e_x^*} + q {\bf e_y^*}$:

With the dual basis defined as above, if we express both a dual vector $w$ and a vector $v$ in terms of their respective bases, then the natural pairing $\langle w, v \rangle$ boils down to just the dot product of the respective coordinates: $$ \begin{aligned} \langle w, v \rangle &= \bigl\langle p {\bf e_x^*} + q {\bf e_y^*} + r {\bf e_z^*}, \; x {\bf e_x} + y {\bf e_y} + z {\bf e_z} \bigr\rangle \\ &= px + qy + rz \end{aligned} $$
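For completeness, here is the intermediate step written out. Bilinearity expands the pairing into nine terms, and the dual basis constraints $\langle {\bf e}_i^*, {\bf e}_j \rangle$ reduce each one to either the product of coordinates or zero:

$$ \begin{aligned} \langle w, v \rangle &= px \langle {\bf e_x^*}, {\bf e_x} \rangle + py \langle {\bf e_x^*}, {\bf e_y} \rangle + pz \langle {\bf e_x^*}, {\bf e_z} \rangle \\ &\quad + qx \langle {\bf e_y^*}, {\bf e_x} \rangle + qy \langle {\bf e_y^*}, {\bf e_y} \rangle + qz \langle {\bf e_y^*}, {\bf e_z} \rangle \\ &\quad + rx \langle {\bf e_z^*}, {\bf e_x} \rangle + ry \langle {\bf e_z^*}, {\bf e_y} \rangle + rz \langle {\bf e_z^*}, {\bf e_z} \rangle \\ &= px \cdot 1 + py \cdot 0 + pz \cdot 0 + qx \cdot 0 + qy \cdot 1 + qz \cdot 0 + rx \cdot 0 + ry \cdot 0 + rz \cdot 1 \\ &= px + qy + rz \end{aligned} $$

Nothing here assumed the basis on $V$ was orthonormal; the cross terms vanish purely because of how the dual basis was defined.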

Transforming Dual Vectors

In the preceding article, we learned that although vectors and bivectors may appear structurally similar (they both have three components, in 3D space), they have different geometric meanings and different behavior when subject to transformations—in particular, to scaling.

With dual vectors, we have a third example in this class! Dual vectors are again “vectorial” objects (obeying the vector space axioms), again structurally similar to vectors and bivectors (having three components, in 3D space), but with a different geometric meaning (linear forms). This immediately suggests we look into dual vectors’ transformation behavior!

Dual vectors are linear forms, which are functions. So how do we transform a function?

The way I like to think about this is that the function’s output values are carried along with the points of its domain when they’re transformed. Imagine labeling every point in the domain with the function’s value at that point. Then apply the transformation to all the points; they move somewhere else, but carry their label along with them. (Another way of thinking about it is that you’re transforming the graph of the function, considered as a point-set in a one-higher-dimensional space.)

To formalize this a bit more: suppose we transform vectors by some matrix $M$, and we want to apply this transformation also to a function $f(v)$, yielding a new function $g(v)$. What we want is that $g$ evaluated on a transformed vector should equal $f$ evaluated on the original vector: $$ g(Mv) = f(v) $$ Or, equivalently, $$ g(v) = f(M^{-1}v) $$ In other words, we can apply a transformation to a function by making a new function that first applies the inverse transformation to its argument, then passes that to the old function.

Note that this only works if $M$ is invertible. If it isn’t, then our picture of “carrying the output values along with the domain points” falls apart: a noninvertible $M$ can collapse many distinct domain points into one, and then how could we decide what the function’s output should be at those points?
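The recipe $g(v) = f(M^{-1}v)$ can be sketched directly as a higher-order function. This is an illustrative 2D version with hypothetical helper names (`inverse_2x2`, `apply`, `transform_function`); it works for any function, linear or not, and refuses a noninvertible $M$.

```python
from fractions import Fraction


def inverse_2x2(m):
    """Inverse of a 2x2 matrix given as ((a, b), (c, d))."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("M is not invertible, so functions cannot be carried along")
    return ((d / det, -b / det), (-c / det, a / det))


def apply(m, v):
    """Matrix-vector product m @ v in 2D."""
    return (m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1])


def transform_function(f, m):
    """Return g with g(v) = f(M^-1 v), so that g(M v) = f(v)."""
    m_inv = inverse_2x2(m)
    return lambda v: f(apply(m_inv, v))


def f(v):
    # A deliberately nonlinear scalar function of a 2D vector.
    return v[0] ** 2 + 3 * v[1]


M = ((Fraction(2), Fraction(1)),
     (Fraction(0), Fraction(1)))
g = transform_function(f, M)

v = (Fraction(3), Fraction(4))
print(g(apply(M, v)) == f(v))  # the label travels with the point
```

Exact fractions are used only so the equality check is not disturbed by floating-point rounding.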

Uniform Scaling

Now that we understand how to apply a transformation to a function, let’s look at uniform scaling as an example. We’ll scale by a factor $a > 0$, so that vectors transform as $v \mapsto av$. Then functions will transform as $f(v) \mapsto f(v/a)$, per the previous section.

Let’s switch back to looking at this from a “dual vector” point of view instead of a “function” point of view. So, if $f(v) = \langle w, v \rangle$ for some dual vector $w$, then what happens when we scale by $a$? $$ \begin{aligned} \langle w, v \rangle \mapsto & \left\langle w, \frac{v}{a} \right\rangle \\ = & \left\langle \frac{w}{a}, v \right\rangle \end{aligned} $$ I’ve just moved the $1/a$ factor from one side of the angle brackets to the other, which is allowed because it’s a bilinear operation. To summarize, we’ve found that the dual vector $w$ transforms as: $$ w \mapsto \frac{w}{a} $$

Hmm, interesting! When we scale vectors by $a$, then dual vectors scale by $1/a$. If you recall the previous article, we justified assigning units like “area” and “volume” to bivectors and trivectors on the basis of their scaling behavior. Following that line of reasoning, we can now conclude that dual vectors carry units of inverse length!

In fact, dual vectors represent oriented linear densities. They provide a quantitative way of talking about situations where some kind of scalar “stuff”—such as probability, texel count, opacity, a change in voltage/temperature/pressure, etc.—is spread out along one dimension in space. When you pair the dual vector with a vector (i.e. evaluate the linear form on a vector), you’re asking “how much of that ‘stuff’ does this vector span?”

Under a scaling, we want to preserve the amount of “stuff”. If we’re scaling up, then the density of “stuff” will need to go down, as the same amount of stuff is now spread over a longer distance; and vice versa. This property is implemented by the inverse scaling behavior of dual vectors.
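Here is a tiny numeric sketch of that conservation property. The specific numbers (a density of 8 texels per unit length along $x$, and a scale factor of 4) are made up for illustration.

```python
def pair(w, v):
    """Natural pairing in matched basis / dual-basis coordinates."""
    return sum(wi * vi for wi, vi in zip(w, v))


def scale_vector(a, v):
    """Vectors scale directly: v -> a v."""
    return tuple(a * vi for vi in v)


def scale_dual_vector(a, w):
    """Dual vectors scale inversely: w -> w / a."""
    return tuple(wi / a for wi in w)


w = (8.0, 0.0, 0.0)  # hypothetical density: 8 texels per unit length along x
v = (2.0, 5.0, 0.0)  # a displacement in space
a = 4.0              # uniform scale factor

before = pair(w, v)
after = pair(scale_dual_vector(a, w), scale_vector(a, v))
print(before, after)  # the amount of "stuff" spanned is unchanged
```

The vector got four times longer, the density got four times thinner, and the product of the two stayed put.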

Sheared Dual Vectors and the Inverse Transpose

We’ve seen how uniform scaling applies inversely to dual vectors. We could study nonuniform scaling now, too, but it turns out that axis-aligned nonuniform scaling isn’t that interesting—it just applies inversely to each axis, as you might expect. It’ll be more illuminating at this point to look at what happens with a shear.

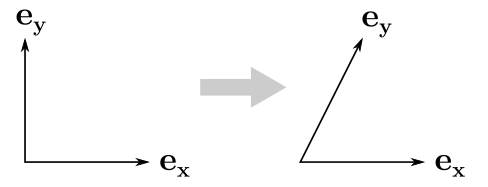

I’ll stick to 2D for this one. As an example transformation, we’ll shear the $y$ axis toward $x$ a little bit: $$ M = \begin{bmatrix} 1 & \tfrac{1}{2} \\ 0 & 1 \end{bmatrix} $$ Here’s what it looks like:

When we perform this transformation on a dual vector, what happens? When you look at it visually, it’s pretty straightforward—the level sets (isolines) of the linear form will tilt to follow the shear.

But how do we express this as a matrix acting on the dual vector’s coordinates? Let’s focus on the $\bf e_x^*$ component. Note that our transformation $M$ doesn’t affect the $x$-axis—it maps $\bf e_x$ to itself. But what about $\bf e_x^*$?

The $\bf e_x^*$ component of a dual vector does change under this transformation, because the isolines pick up the shear! Or, to put it another way: although distances along the $x$ axis (which $\bf e_x^*$ measures) don’t change here, $\bf e_x^*$ still cares about what the other axes are doing because it has to stay parallel to them. That’s one of the defining conditions for the dual basis to do its job.

In particular, we have that $\bf e_x^*$ maps to ${\bf e_x^*} - \tfrac{1}{2}{\bf e_y^*}$. If we work it out the rest of the way, the full matrix that applies to the coordinates of a dual vector is: $$ \begin{bmatrix} 1 & 0 \\ -\tfrac{1}{2} & 1 \end{bmatrix} $$ This is the inverse transpose of $M$!
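The shear example is easy to check numerically. The sketch below (helper names are my own) computes the inverse transpose of the 2D shear matrix $M$ exactly, applies it to the coordinates of ${\bf e_x^*}$ and ${\bf e_y^*}$, and confirms that the natural pairing is unchanged when a vector and a dual vector are transformed together.

```python
from fractions import Fraction


def inverse_transpose_2x2(m):
    """Inverse transpose of ((a, b), (c, d)): (1/det) * ((d, -c), (-b, a))."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is not invertible")
    return ((d / det, -c / det), (-b / det, a / det))


def apply(m, v):
    """Matrix-vector product m @ v in 2D."""
    return (m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1])


def pair(w, v):
    return w[0] * v[0] + w[1] * v[1]


# The shear from the text.
M = ((Fraction(1), Fraction(1, 2)),
     (Fraction(0), Fraction(1)))
M_inv_T = inverse_transpose_2x2(M)

ex_star = (Fraction(1), Fraction(0))
ey_star = (Fraction(0), Fraction(1))
print(apply(M_inv_T, ex_star))  # coordinates of the transformed e_x*
print(apply(M_inv_T, ey_star))  # coordinates of the transformed e_y*

# Transforming w by M^-T and v by M leaves <w, v> unchanged.
w = (Fraction(3), Fraction(-2))
v = (Fraction(4), Fraction(5))
print(pair(w, v) == pair(apply(M_inv_T, w), apply(M, v)))
```

The last check is the whole point of the construction: the inverse transpose is the matrix that keeps every pairing $\langle w, v \rangle$ invariant.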

We can loosely relate the effect of the inverse transpose here to that of the cofactor matrix for bivectors, as seen in the preceding article. Like a bivector, each dual basis element cares about what’s happening to the other axes (because it needs to keep parallel to them)—but it also must scale inversely along its own axis. The determinant of $M$ gives the cumulative scaling along all the axes: $$ \det M = \text{scaling on my axis} \cdot \text{scaling on other axes} $$ We can algebraically rearrange this to: $$ \frac{1}{\text{scaling on my axis}} = \frac{1}{\det M} \cdot \text{scaling on other axes} $$ This matches the relation between the inverse transpose and the cofactor matrix. $$ M^{-T} = \frac{1}{\det M} \cdot \text{cofactor}(M) $$ I’m handwaving a lot here—a detailed geometric demonstration would take us off into the weeds—but hopefully this gives at least a little bit of intuition for why the inverse transpose matrix is the right thing to use for dual vectors.
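As a sanity check on that relation, here are two small 2D cases worked by hand. The scaling matrix $S$ is an extra example of my own, not one from earlier in the article. For the shear $M$ above, $\det M = 1$, so the cofactor matrix and the inverse transpose coincide:

$$ \text{cofactor}(M) = \begin{bmatrix} 1 & 0 \\ -\tfrac{1}{2} & 1 \end{bmatrix} = M^{-T} $$

For a nonuniform scaling, the determinant is no longer 1 and the two matrices differ by exactly that factor:

$$ S = \begin{bmatrix} 2 & 0 \\ 0 & 3 \end{bmatrix}, \quad \det S = 6, \quad \text{cofactor}(S) = \begin{bmatrix} 3 & 0 \\ 0 & 2 \end{bmatrix}, \quad S^{-T} = \frac{1}{6} \begin{bmatrix} 3 & 0 \\ 0 & 2 \end{bmatrix} = \begin{bmatrix} \tfrac{1}{2} & 0 \\ 0 & \tfrac{1}{3} \end{bmatrix} $$

Reading off the $x$ entry: the cofactor holds the scaling on the other axis (3), and dividing by $\det S = 6$ leaves $\tfrac{1}{2}$, the reciprocal of the scaling on the $x$ axis itself.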

So What’s a Normal Vector, Anyway?

As we’ve seen, the level sets of a linear form are parallel lines in 2D, or planes in 3D. This implies that we can define a plane by picking out a specific level set of a given dual vector: $$ \langle w, v \rangle = d $$ The dual vector $w$ is acting as a signed distance field for the plane, up to a constant scale factor.

We’ve also seen that when expressed in terms of matched basis-and-dual-basis components, the natural pairing product $\langle w, v \rangle$ reduces to a dot product $w \cdot v$. And then the above equation looks like the familiar plane equation: $$ w \cdot v = d $$ This shows that the dual vector’s coordinates with respect to the dual basis are also the coordinates of a normal vector to the plane, in the standard vector basis.

So, normal vectors can be interpreted as dual vectors expressed in the dual basis, and that’s why they transform with the inverse transpose!
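To see this at work on a plane, here is a sketch in 3D. It builds the inverse transpose from the cofactor matrix divided by the determinant (the relation quoted earlier), using the fact that the rows of a 3×3 cofactor matrix are cross products of the original rows. The matrix, plane, and sample points are arbitrary choices for the example, and the helper names are hypothetical.

```python
from fractions import Fraction


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def mat_vec(m, v):
    return tuple(dot(row, v) for row in m)


def inverse_transpose(m):
    """M^-T = cofactor(M) / det(M), for a 3x3 matrix given as three rows."""
    r0, r1, r2 = m
    cof = (cross(r1, r2), cross(r2, r0), cross(r0, r1))
    det = dot(r0, cof[0])
    if det == 0:
        raise ValueError("matrix is not invertible")
    return tuple(tuple(c / det for c in row) for row in cof)


def frac_rows(rows):
    """Convert integer entries to exact fractions."""
    return tuple(tuple(Fraction(x) for x in row) for row in rows)


# A shear combined with nonuniform scaling.
M = frac_rows(((2, 1, 0), (0, 1, 0), (0, 0, 3)))

# The plane <w, v> = d, and three points lying on it.
w = (Fraction(1), Fraction(1), Fraction(0))
d = 2
points = [(2, 0, 0), (0, 2, 5), (1, 1, -1)]

w_new = mat_vec(inverse_transpose(M), w)
levels = [dot(w_new, mat_vec(M, p)) for p in points]
print(levels)  # every transformed point sits on the level set d of w_new

# Transforming w with M itself does not give a valid normal:
naive_levels = [dot(mat_vec(M, w), mat_vec(M, p)) for p in points]
print(naive_levels)  # the values disagree, so M w is not constant on the new plane
```

The offset $d$ is unchanged by the transformation, which is another way of saying that the dual vector carries its level sets along with the points.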

But wait—in the last article, didn’t I just say that normal vectors should be interpreted as bivectors, and therefore they transform with the cofactor matrix? Which one is it?

Ultimately, I don’t think there’s a definitive answer to this question! “Normal vector” as an idea is a bit too vague—bivectors and dual vectors are both defensible ways to formalize the “normal vector” concept. As we’ve seen, the way they transform is equivalent as far as orientation: bivectors and dual vectors both transform to stay perpendicular to the plane they define, by either $B \wedge v = d$ or $\langle w, v \rangle = d$, respectively. The difference between them is in the units they carry and their scaling behavior: bivectors are areas, while dual vectors are inverse lengths.
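The difference in scaling behavior is easiest to see with a uniform scaling in 3D, $M = aI$ with $a > 0$, worked out here as a quick check:

$$ \text{cofactor}(M) = a^2 I, \qquad M^{-T} = \frac{1}{a} I, \qquad \det M = a^3 $$

Both matrices are positive multiples of the identity, so a normal keeps its direction either way. Treated as a bivector it picks up a factor of $a^2$ (an area); treated as a dual vector it picks up a factor of $1/a$ (an inverse length). The two results differ by exactly $\det M = a^3$, consistent with $M^{-T} = \frac{1}{\det M} \cdot \text{cofactor}(M)$.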

That’s all I have to say about transforming normal vectors! But we’ve got another question still dangling. At the end of Part 1, I asked about vectorial quantities with negative scaling powers. In dual vectors, we’ve now achieved scaling power −1. But what about −2 and −3? To find those, we’re going to have to combine dual spaces with Grassmann algebra. We’ll do that in the third and final part of this series.