Normals and the Inverse Transpose, Part 1: Grassmann Algebra

A mysterious fact about linear transformations is that some of them, namely nonuniform scalings and shears, make a puzzling distinction between “plain” vectors and normal vectors. When we transform “plain” vectors with a matrix, we’re required to transform the normals with—for some reason—the inverse transpose of that matrix. How are we to understand this?

It takes only a bit of algebra to show that using the inverse transpose ensures that transformed normals will remain perpendicular to the tangent planes they define. That’s fine as far as it goes, but it misses a deeper and more interesting story about the geometry behind this—which I’ll explore over the next few articles.

Units and Scaling

Before we dig into the meat of this article, though, let’s take a little apéritif. Consider plain old uniform scaling (by the same factor along all axes). It’s hard to think of a more innocuous transformation—it’s literally just multiplying all vectors by a scalar constant.

But if we look carefully, there’s already something not quite trivial going on here. Some quantities carry physical “dimensions” or “units”, like lengths, areas, and volumes. When we perform a scaling transformation, these quantities are altered in a way that corresponds to their units. Meanwhile, other quantities are “unitless” and don’t change under a scaling.

To be really explicit, let’s enumerate the possibilities for scaling behavior in 3D space. Suppose we scale by a factor $a > 0$. Then:

- Unitless numbers do not change—or in other words, they get multiplied by $a^0$.

- Lengths get multiplied by $a$.

- Areas get multiplied by $a^2$.

- Volumes get multiplied by $a^3$.

And that’s not all—there are also densities, which vary inversely with the scale factor:

- Linear densities get multiplied by $1/a$.

- Area densities get multiplied by $1/a^2$.

- Volume densities get multiplied by $1/a^3$.

Think of things like “texels per length”, or “probability per area”, or “particles per volume”. If you scale up a 3D model while keeping its textures the same size, then its texels-per-length density goes down, and so on.

So, even restricting ourselves to just uniform scaling and looking at scalar (non-vector) values, we already have a phenomenon where different quantities—which appear the same structurally, i.e. they’re all just single-component scalars—are revealed to behave differently when a transformation is applied, owing to the different units they carry. In particular, they carry different powers of length, ranging from −3 to +3. A quantity with $k$ powers of length scales as $a^k$.
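As a quick numerical check of these scaling powers, here is a minimal Python sketch. The helper name `scaled` and the sample quantities (a unit cube holding 8 particles) are made up for illustration; the function just multiplies a quantity by $a^k$.

```python
def scaled(value: float, power: int, a: float) -> float:
    """Value of a quantity after uniformly scaling space by `a`, where the
    quantity carries `power` powers of length (hypothetical helper)."""
    return value * a ** power


a = 2.0

# A unit cube: edge length 1, face area 1, volume 1, holding 8 particles.
edge = scaled(1.0, 1, a)       # lengths scale as a
face = scaled(1.0, 2, a)       # areas scale as a^2
volume = scaled(1.0, 3, a)     # volumes scale as a^3
density = scaled(8.0, -3, a)   # particles per volume scales as a^-3

# The particle count is unitless, so density * volume does not change.
count = density * volume

print(edge, face, volume, density, count)
```

The powers of $a$ cancel in `density * volume`, which is why the particle count comes out the same before and after scaling.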

(We could also invent quantities that have scaling powers of ±4 or more, or even fractional scaling powers. But I’ll leave those aside, as such things don’t have a strong geometric interpretation in 3D.)

Okay, maybe this is somehow reminiscent of the “plain vectors versus normals” thing. But how does this work for vector quantities? How do nonuniform scalings affect this picture? And where does the inverse transpose come into it? To really understand this, we’ll have to range farther into the domains of math.

Grassmann Algebra

For the rest of this series, we’re going to be making use of Grassmann algebra (also called “exterior algebra”). Since this is probably unfamiliar to many of my readers, I’ll give a pretty quick introduction to it. For more background, see this talk by Eric Lengyel, or the first few chapters of Dorst et al’s Geometric Algebra for Computer Science; there are also many other references available around the web.

Grassmann algebra extends linear algebra to operate not just on vectors, but on additional “higher-grade” geometric entities called bivectors, trivectors, and so on. These objects are collectively known as $\bm k$-vectors, where $k$ is the grade or dimensionality of the object. They obey the same mathematical rules as vectors do—they can be added together, and multiplied by scalars. However, their geometric interpretation is different.

We often think of a vector as being sort of an abstract arrow—it has both a direction in space in which the arrow points, and a magnitude, represented by the arrow’s length. A bivector is a lot like that, but planar instead of linear. Instead of an arrow, it’s an abstract chunk of a flat surface.

Like vectors, bivectors also have directions, in the sense that a planar surface can face various directions in space; and they have magnitudes, geometrically represented as the area of the surface chunk. However, what they don’t have is a notion of shape within their plane. When you picture a bivector as a piece of a plane, you’re free to imagine it as a square, a circle, a parallelogram, or any funny shape you want, as long as it has the correct area.

Similarly, trivectors are three-dimensional vectorial quantities; they represent a chunk of space, instead of a flat surface or an arrow. Again, they have no defined shape, only a magnitude—which is now a volume instead of an area or length.

In 3D space, trivectors don’t really have a direction in a useful sense—or rather, there’s only one possible direction, which is parallel to space. However, trivectors still come in two opposite orientations, which we can denote as “positive” and “negative”, or alternatively “right-handed” and “left-handed”. It’s much like how a vector can point either left or right along a 1D line, and we can label those orientations as positive and negative if we like.

In higher dimensions, trivectors could also face different directions, as vectors and bivectors do. Higher-dimensional spaces would even allow for quadvectors and higher grades. However, we’ll be sticking to 3D for this series!

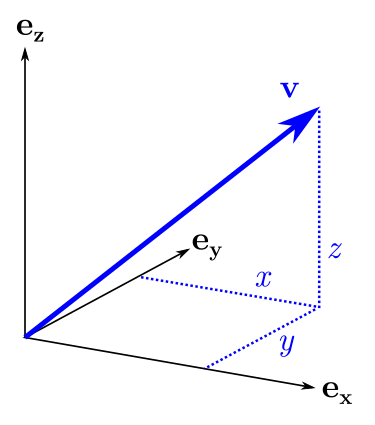

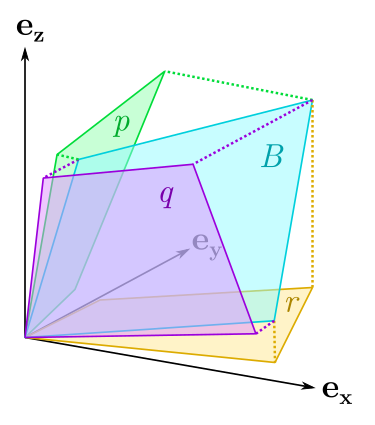

Basis $k$-Vectors

Just as you can break down a vector into components with respect to a basis, you can do the same with bivectors and trivectors. When we write a vector $v$ in terms of coordinates, $v = (x, y, z)$, what we’re really saying is that $v$ can be made up as a linear combination of basis vectors: $$ v = x \, {\bf e_x} + y \, {\bf e_y} + z \, {\bf e_z} $$ The basis vectors ${\bf e_x}, {\bf e_y}, {\bf e_z}$ can be taken as defining the direction and scale of the $x, y, z$ coordinate axes. In the same way, a bivector $B$ can be formed from a linear combination of basis bivectors: $$ B = p \, {\bf e_{yz}} + q \, {\bf e_{zx}} + r \, {\bf e_{xy}} $$ Here, ${\bf e_{xy}}$ would be a bivector of unit area oriented along the $xy$ plane, and similarly for ${\bf e_{yz}}, {\bf e_{zx}}$. The basis bivectors correspond not to individual coordinate axes, but to the planes spanned by pairs of axes. This defines “bivector coordinates” $(p, q, r)$ by which we can identify or create any bivector in the space.

The trivector case is less interesting: $$ T = t \, {\bf e_{xyz}} $$ As mentioned before, trivectors in 3D only have one possible direction, so they have only one basis element: the unit trivector “along the $xyz$ space”, so to speak. All other trivectors are just some scalar multiple of ${\bf e_{xyz}}$.

The Wedge Product

So, Grassmann algebra contains all these vector-like entities of different grades: ordinary vectors (grade 1), bivectors (grade 2), and trivectors (grade 3). You can also think of plain old scalars as being grade 0. Finally, to let different grades interoperate, Grassmann algebra defines an operation called the wedge product, or exterior product, denoted $\wedge$. This gives you the ability to create a bivector by multiplying together two vectors. For example: $$ {\bf e_x} \wedge {\bf e_y} = {\bf e_{xy}} $$ In general, you can wedge any two vectors, and the result will be a bivector lying in the plane spanned by those vectors; its magnitude will be the area of the parallelogram formed by the vectors (like the magnitude of the cross product).

Note, however, that the bivector doesn’t “remember” the specific two vectors it was wedged from. Any two vectors in the same plane, spanning a parallelogram of the same area (and orientation), will generate the same bivector. A bivector can also be factored back into two vectors, but not uniquely.

You can also wedge together three vectors, or a bivector with a vector, to form a trivector. $$ {\bf e_x} \wedge {\bf e_y} \wedge {\bf e_z} = {\bf e_{xy}} \wedge {\bf e_z} = {\bf e_{xyz}} $$ This turns out to be equivalent to the “scalar triple product”, producing a trivector representing the oriented volume of the parallelepiped formed by the three vectors.
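To make the triple wedge concrete, here is a small Python sketch that computes the single coefficient $t$ of ${\bf e_{xyz}}$ as a 3×3 determinant, which is the scalar triple product written out. The function name `wedge3` and the sample vectors are assumptions for illustration only.

```python
Vec3 = tuple[float, float, float]


def wedge3(u: Vec3, v: Vec3, w: Vec3) -> float:
    """Coefficient t of e_xyz in u ^ v ^ w: the determinant of the matrix
    whose columns are u, v, w (the scalar triple product)."""
    ux, uy, uz = u
    vx, vy, vz = v
    wx, wy, wz = w
    return (ux * (vy * wz - vz * wy)
            + uy * (vz * wx - vx * wz)
            + uz * (vx * wy - vy * wx))


ex, ey, ez = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)

unit = wedge3(ex, ey, ez)      # e_x ^ e_y ^ e_z = e_xyz, so t = 1
flipped = wedge3(ey, ex, ez)   # opposite orientation, so t = -1
box = wedge3((2.0, 0.0, 0.0), (0.0, 3.0, 0.0), (0.0, 0.0, 4.0))  # 2 x 3 x 4 box

print(unit, flipped, box)
```

The sign of the result carries the orientation (right-handed or left-handed), and its absolute value is the volume of the parallelepiped.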

The wedge product obeys most of the ordinary multiplication rules you know, such as associativity and the distributive law. Scalar multiplication commutes with wedges—for scalar $a$, we have: $$ (au) \wedge v = u \wedge (av) = a(u \wedge v) $$ However, wedging two vectors together is anticommutative (again like the cross product). For vectors $u, v$, we have: $$ u \wedge v = -(v \wedge u) $$ This has a few implications worth noting. First, any vector wedged with itself always gives zero: $v \wedge v = 0$. Furthermore, any list of linearly dependent vectors, wedged together, will give zero. For example, $u \wedge v = 0$ whenever $u$ and $v$ are collinear. In the case of three vectors, $u \wedge v \wedge w = 0$ whenever $u, v, w$ are coplanar.
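These rules are easy to check numerically. Below is a minimal Python sketch that computes the wedge of two 3D vectors as coordinates $(p, q, r)$ in the basis ${\bf e_{yz}}, {\bf e_{zx}}, {\bf e_{xy}}$; the function name `wedge2` and the test vectors are assumptions chosen for illustration.

```python
Vec3 = tuple[float, float, float]


def wedge2(u: Vec3, v: Vec3) -> Vec3:
    """Coordinates (p, q, r) of u ^ v in the basis e_yz, e_zx, e_xy."""
    ux, uy, uz = u
    vx, vy, vz = v
    return (uy * vz - uz * vy,   # p: e_yz component
            uz * vx - ux * vz,   # q: e_zx component
            ux * vy - uy * vx)   # r: e_xy component


u, v = (1.0, 2.0, 3.0), (4.0, 5.0, 6.0)

uv = wedge2(u, v)                           # (-3.0, 6.0, -3.0)
vu = wedge2(v, u)                           # anticommutativity: equals -uv
self_wedge = wedge2(u, u)                   # v ^ v = 0
collinear = wedge2(u, (2.0, 4.0, 6.0))      # u ^ (2u) = 0
scaled = wedge2((2.0, 4.0, 6.0), v)         # (2u) ^ v = 2 (u ^ v)
same_plane = wedge2(u, (6.0, 9.0, 12.0))    # u ^ (v + 2u) = u ^ v

print(uv, vu, self_wedge, collinear, scaled, same_plane)
```

The last line illustrates that a bivector does not remember its factors: replacing $v$ with $v + 2u$ shears the parallelogram within its plane but leaves the bivector unchanged.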

This also explains why grades beyond 3 don’t exist in 3D space. The wedge product of four 3D vectors is always zero, because you can’t have four linearly independent vectors in 3D.

Transforming $k$-Vectors

Earlier, I asserted that you could think of the magnitude of a vector as a length, that of a bivector as an area, and that of a trivector as a volume. But what justifies those assignments of units to these quantities?

In the apéritif, we saw that lengths, areas, and volumes have distinct scaling behavior: upon uniformly scaling 3D space by a factor $a > 0$, they scale as $a, a^2, a^3$, respectively. We now have the tools to see that vectors, bivectors, and trivectors behave in the same way.

Scaling a vector can be done by multiplying with the appropriate matrix: $$ \begin{gathered} v \mapsto Mv \\ \begin{bmatrix} x \\ y \\ z \end{bmatrix} \mapsto \begin{bmatrix} a & 0 & 0 \\ 0 & a & 0 \\ 0 & 0 & a \end{bmatrix} \begin{bmatrix} x \\ y \\ z \end{bmatrix} = \begin{bmatrix} ax \\ ay \\ az \end{bmatrix} = av \end{gathered} $$ The vector $v$ as a whole, as well as its components $x, y, z$ and its scalar magnitude, all pick up a factor $a$ upon scaling; so we can safely call them lengths. Hopefully, this is uncontroversial!

What about bivectors? To see how they behave under scaling (or any linear transformation), we can turn to the wedge product. In 3D, any bivector can be factored as a wedge product of two vectors. We already know how to transform vectors. Therefore, we can transform a bivector by transforming its vector factors and re-wedging them: $$ \begin{aligned} B &= u \wedge v \\ (u \wedge v) &\mapsto (Mu) \wedge (Mv) \\ &= (au) \wedge (av) \\ &= a^2 (u \wedge v) \\ &= a^2 B \end{aligned} $$ Presto! Since the bivector has two vector factors, and each one scales by $a$, the bivector picks up an overall factor of $a^2$, making it an area.

Trivectors too can be transformed by factoring them into vectors. It comes as no surprise to find that their three vector factors give them an overall scaling of $a^3$. Just for completeness: $$ \begin{aligned} T &= (u \wedge v \wedge w) \\ (u \wedge v \wedge w) &\mapsto (Mu) \wedge (Mv) \wedge (Mw) \\ &= (au) \wedge (av) \wedge (aw) \\ &= a^3 (u \wedge v \wedge w) \\ &= a^3 T \end{aligned} $$
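The two derivations above can be spot-checked with a short Python sketch. The helper names (`wedge2`, `wedge3`, `scale`) and the sample vectors are assumptions for illustration; bivectors are stored as $(p, q, r)$ coordinates and trivectors as the single coefficient of ${\bf e_{xyz}}$.

```python
def wedge2(u, v):
    """Coordinates (p, q, r) of u ^ v in the basis e_yz, e_zx, e_xy."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def wedge3(u, v, w):
    """Coefficient of e_xyz in u ^ v ^ w (a 3x3 determinant)."""
    return (u[0] * (v[1] * w[2] - v[2] * w[1])
            + u[1] * (v[2] * w[0] - v[0] * w[2])
            + u[2] * (v[0] * w[1] - v[1] * w[0]))


def scale(a, v):
    """Apply the uniform scaling M = a * I to a tuple of components."""
    return tuple(a * c for c in v)


a = 2.0
u, v, w = (1.0, 0.0, 2.0), (0.0, 3.0, 1.0), (1.0, 1.0, 0.0)

B = wedge2(u, v)
B_scaled = wedge2(scale(a, u), scale(a, v))               # (Mu) ^ (Mv)
T = wedge3(u, v, w)
T_scaled = wedge3(scale(a, u), scale(a, v), scale(a, w))  # (Mu) ^ (Mv) ^ (Mw)

print(B_scaled == scale(a ** 2, B))   # bivector picks up a^2
print(T_scaled == a ** 3 * T)         # trivector picks up a^3
```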

Bivectors and Nonuniform Scaling

Now, we can finally begin to address our original question. What complications come into play when we start doing nonuniform scaling?

To investigate this, let’s study an example. We’ll scale by a factor of 3 along the $x$ axis, leaving the other two axes alone. Our scaling matrix will therefore be: $$ M = \begin{bmatrix} 3 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix} $$ For plain old vectors, this does the obvious thing: the $x$ component gets multiplied by 3, and the $y, z$ components are unchanged. In general, this alters both the vector’s length and direction in a way that depends on its initial direction—vectors close to the $x$ axis are going to be stretched more, while vectors close to the $yz$ plane will be less affected.

What happens to a bivector when we perform this transformation? First, let’s just think about it geometrically. A bivector represents a chunk of area with a particular planar facing direction. When we stretch this out along the $x$ axis, we again expect both its direction and area to change. But different bivectors will be affected differently: a bivector close to the $yz$ plane will again be less affected by the scaling, while bivectors whose planes have a significant component along the $x$ axis will be stretched more.

Okay, now to the algebra. As we saw before, we can decompose any bivector $B$ into components along axis-aligned basis bivectors: $$ B = p \, {\bf e_{yz}} + q \, {\bf e_{zx}} + r \, {\bf e_{xy}} $$ To apply our scaling $M$ to the bivector, we just need to see how $M$ affects the basis bivectors. This can be done by factoring them into their component basis vectors and applying $M$ to those: $$ \begin{aligned} {\bf e_{yz}} = {\bf e_y} \wedge {\bf e_z} \quad &\mapsto \quad (M{\bf e_y}) \wedge (M{\bf e_z}) = {\bf e_y} \wedge {\bf e_z} = {\bf e_{yz}} \\ {\bf e_{zx}} = {\bf e_z} \wedge {\bf e_x} \quad &\mapsto \quad (M{\bf e_z}) \wedge (M{\bf e_x}) = {\bf e_z} \wedge 3{\bf e_x} = 3{\bf e_{zx}} \\ {\bf e_{xy}} = {\bf e_x} \wedge {\bf e_y} \quad &\mapsto \quad (M{\bf e_x}) \wedge (M{\bf e_y}) = 3{\bf e_x} \wedge {\bf e_y} = 3{\bf e_{xy}} \end{aligned} $$ This matches the geometric intuition: $\bf e_{yz}$ didn’t change at all, while $\bf e_{zx}$ and $\bf e_{xy}$ both picked up a factor of 3 because their planes include the $x$ axis.

So, the overall effect of applying $M$ to the bivector $B$ is: $$ B \mapsto p \, {\bf e_{yz}} + 3q \, {\bf e_{zx}} + 3r \, {\bf e_{xy}} $$ Now, just as we would for a vector, we can also write out the transformation of $B$ as components acted on by a matrix: $$ \begin{bmatrix} p \\ q \\ r \end{bmatrix} \mapsto \begin{bmatrix} 1 & 0 & 0 \\ 0 & 3 & 0 \\ 0 & 0 & 3 \end{bmatrix} \begin{bmatrix} p \\ q \\ r \end{bmatrix} = \begin{bmatrix} p \\ 3q \\ 3r \end{bmatrix} $$ This is the same transformation we just derived, only written in a different notation. But notice something here: the matrix appearing in this equation is not the same matrix $M$ used to transform vectors.
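Here is a minimal Python sketch of that comparison, under the same conventions as the text: it transforms a bivector once by re-wedging its transformed vector factors, and once by applying the diagonal matrix with entries $1, 3, 3$ to its $(p, q, r)$ coordinates. The names `wedge2`, `mat_vec`, and `BIVECTOR_M`, and the sample vectors, are assumptions for illustration.

```python
def wedge2(u, v):
    """Coordinates (p, q, r) of u ^ v in the basis e_yz, e_zx, e_xy."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def mat_vec(m, v):
    """Multiply a 3x3 matrix (tuple of rows) by a 3-component column."""
    return tuple(sum(m[i][j] * v[j] for j in range(3)) for i in range(3))


# Scale by 3 along x: the matrix used for plain vectors.
M = ((3.0, 0.0, 0.0),
     (0.0, 1.0, 0.0),
     (0.0, 0.0, 1.0))

# The matrix acting on bivector coordinates (p, q, r), as derived above.
BIVECTOR_M = ((1.0, 0.0, 0.0),
              (0.0, 3.0, 0.0),
              (0.0, 0.0, 3.0))

u, v = (1.0, 2.0, 3.0), (4.0, 5.0, 6.0)
B = wedge2(u, v)                                   # (-3.0, 6.0, -3.0)

rewedged = wedge2(mat_vec(M, u), mat_vec(M, v))    # transform factors, re-wedge
by_matrix = mat_vec(BIVECTOR_M, B)                 # transform coordinates directly
wrong = mat_vec(M, B)                              # using M itself gives something else

print(rewedged, by_matrix, wrong)
```

Both routes give $(p, 3q, 3r)$, while applying $M$ to the coordinates would give $(3p, q, r)$ instead.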

Apropos of nothing, I’m just going to mention that the inverse transpose of $M$ is proportional to the matrix above: $$ M^{-T} = \begin{bmatrix} \tfrac{1}{3} & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix} $$ HMM. 🤔🤔🤔

The Cofactor Matrix

In fact, the matrix we need for transforming bivectors is the cofactor matrix of $M$.

This is proportional to the inverse transpose by a factor of $\det M$. (The inverse of $M$ can be calculated as the transpose of its cofactor matrix divided by $\det M$.) Actually, the cofactor matrix is defined even when $M$ is noninvertible—a nice property, since we can transform vectors using a noninvertible matrix, and we should be able to do the same to bivectors!
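For the example matrix $M$ from the previous section, this proportionality can be checked directly. The notation $\operatorname{cof}(M)$ for the cofactor matrix is introduced here only for this check: $$ \det M = 3, \qquad (\det M) \, M^{-T} = 3 \begin{bmatrix} \tfrac{1}{3} & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix} = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 3 & 0 \\ 0 & 0 & 3 \end{bmatrix} = \operatorname{cof}(M) $$ The result is exactly the matrix that transformed the bivector coordinates $(p, q, r)$.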

Let’s take a closer look at why the cofactor matrix is the right thing. First of all, what even is a “cofactor” here?

Each element of an $n \times n$ square matrix has a corresponding cofactor. The recipe for calculating the cofactor of the element at row $i$, column $j$ is as follows:

- Start with the original $n \times n$ matrix, and delete the $i$th row and the $j$th column. This reduces it to an $(n - 1) \times (n - 1)$ submatrix of all the remaining elements.

- Calculate the determinant of this submatrix.

- Multiply the determinant by $(-1)^{i+j}$, i.e. flip the sign if $i + j$ is odd. That’s the cofactor!

Then, the cofactor matrix is just sticking all the cofactors into a new $n \times n$ matrix.
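The recipe translates almost line for line into code. This is a minimal Python sketch, assuming matrices are stored as lists of row lists; the function names `det` and `cofactor_matrix` are illustrative, and the determinant uses a simple recursive expansion that is only sensible for small matrices.

```python
def det(m):
    """Determinant by expansion along the first row (fine for small n)."""
    n = len(m)
    if n == 1:
        return m[0][0]
    total = 0
    for j in range(n):
        sub = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(sub)
    return total


def cofactor_matrix(m):
    """Matrix of cofactors of a square matrix (n >= 2)."""
    n = len(m)
    result = []
    for i in range(n):
        row = []
        for j in range(n):
            # Delete row i and column j to get the (n-1) x (n-1) submatrix.
            sub = [r[:j] + r[j + 1:] for k, r in enumerate(m) if k != i]
            # Take its determinant and flip the sign if i + j is odd.
            # (0-based indices give the same parity as 1-based ones.)
            row.append((-1) ** (i + j) * det(sub))
        result.append(row)
    return result


M = [[3, 0, 0],
     [0, 1, 0],
     [0, 0, 1]]
C = cofactor_matrix(M)
print(C)   # [[1, 0, 0], [0, 3, 0], [0, 0, 3]]
```

For the scaling matrix from the previous section, this reproduces the matrix that acted on the bivector coordinates.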

So how is it that this construction works to transform a bivector? Let’s look at the bivector’s first basis component: $p \, {\bf e_{yz}}$. This term represents an area component in the $yz$ plane; as such, it only cares what the transformation $M$ does to the $y$ and $z$ axes. Well, the recipe for the 1,1 cofactor of $M$ instructs us to extract the 2×2 submatrix that specifies what $M$ does to the $y$ and $z$ axes. Then we take its determinant, which is nothing but the factor by which area in the $yz$ plane gets scaled!

Because of the way we chose our bivector basis—${\bf e_{yz}}, {\bf e_{zx}}, {\bf e_{xy}}$ in that order—each element of the cofactor matrix automatically calculates a determinant that tells how $M$ scales area in the appropriate plane. Or, for the off-diagonal elements, how $M$ maps area from one axis plane to another. In other words, the cofactors work out to be exactly the coefficients needed to transform the axis components of a bivector.
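To see the off-diagonal elements at work, here is a Python sketch that checks the claim on a matrix combining a shear and a scale. The matrix, the vectors, and the helper names are assumptions for illustration; `cofactor3` uses the cyclic-index form of the 3×3 cofactors, which already includes the sign factor.

```python
def wedge2(u, v):
    """Coordinates (p, q, r) of u ^ v in the basis e_yz, e_zx, e_xy."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def mat_vec(m, v):
    """Multiply a 3x3 matrix (list of rows) by a 3-component column."""
    return tuple(sum(m[i][j] * v[j] for j in range(3)) for i in range(3))


def cofactor3(m):
    """Cofactor matrix of a 3x3 matrix, using cyclic index shifts."""
    return [[m[(i + 1) % 3][(j + 1) % 3] * m[(i + 2) % 3][(j + 2) % 3]
             - m[(i + 1) % 3][(j + 2) % 3] * m[(i + 2) % 3][(j + 1) % 3]
             for j in range(3)]
            for i in range(3)]


# Shear x by 2y, and scale z by 3 (an arbitrary example).
M = [[1, 2, 0],
     [0, 1, 0],
     [0, 0, 3]]

u, v = (1, 2, 3), (4, 5, 6)
B = wedge2(u, v)                                  # (-3, 6, -3)

rewedged = wedge2(mat_vec(M, u), mat_vec(M, v))   # transform factors, re-wedge
C = cofactor3(M)                                  # [[3, 0, 0], [-6, 3, 0], [0, 0, 1]]
by_cofactor = mat_vec(C, B)                       # transform coordinates directly

print(rewedged, by_cofactor)
```

The nonzero off-diagonal entry of `C` is what maps part of the ${\bf e_{yz}}$ component into the ${\bf e_{zx}}$ component under the shear.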

(The sign factor in the third step of the recipe above, by the way, serves to fix up some order issues. Namely, without the sign factor, we’d have $\bf e_{xz}$ instead of $\bf e_{zx}$. The latter is the preferred choice of basis element, for various reasons of convention.)

Incidentally, although we’re focusing on the 3D case here, I’ll quickly note that in $n$ dimensions, the cofactor matrix works to transform $(n-1)$-vectors (in the appropriate basis). In fact, to transform $k$-vectors in general, you would want a matrix of $k \times k$ minors of $M$ (determinants of the submatrices left after deleting $n - k$ rows and $n - k$ columns).

Bivectors and Normals

At this point, I have to make a small confession. I’ve been hiding something up my sleeve for the past few pages. The trick is this: bivectors are isomorphic to normal vectors in 3D. In fact, the components $(p, q, r)$ of a bivector in our standard basis are exactly (up to normalization) the $(x, y, z)$ components of a normal to the bivector’s plane!

Let’s see how this comes about. We saw earlier that wedging a set of linearly dependent vectors together will give zero. This means that the plane of a bivector $B$ can be defined by the following equation: $$ B \wedge v = 0 $$ Any vector $v$ that lies in $B$’s plane will satisfy this equation, because it will form a linearly dependent set with two vectors “inside” $B$ (two vectors that span the plane). Or, to put it another way, the trivector spanned by $B$ and $v$ will have zero volume.

Suppose we expand this equation using our standard vector and bivector bases, and simplify: $$ \begin{gathered} (p \, {\bf e_{yz}} + q \, {\bf e_{zx}} + r \, {\bf e_{xy}}) \wedge (x \, {\bf e_x} + y \, {\bf e_y} + z \, {\bf e_z}) = 0 \\ (px \, {\bf e_{yzx}} + qy \, {\bf e_{zxy}} + rz \, {\bf e_{xyz}}) = 0 \\ (px + qy + rz) {\bf e_{xyz}} = 0 \\ px + qy + rz = 0 \\ \end{gathered} $$ Let me annotate this a bit in case the steps weren’t clear. In the second line I’ve distributed the wedge product out over all the basis terms; most of the terms fall out because they have two copies of the same axis wedged in (for example, ${\bf e_{yz}} \wedge {\bf e_y} = 0$). In the third line, I reordered the axes in all the trivectors to $\bf e_{xyz}$, which we can do as long as we keep track of the sign flips—and here, they all have an even number of sign flips. Finally, I factored $\bf e_{xyz}$ out of the whole thing and discarded it.

Now, the final line looks just like a dot product between vectors $(p, q, r)$ and $(x, y, z)$! Or in other words, it looks like the usual plane equation $n \cdot v = 0$, with normal vector $n = (p, q, r)$.

This shows that the bivector coordinates $p, q, r$ with respect to the basis ${\bf e_{yz}}, {\bf e_{zx}}, {\bf e_{xy}}$ are also the coordinates of a normal to the plane, in the standard vector basis ${\bf e_x}, {\bf e_y}, {\bf e_z}$; moreover, the operations of wedging with a bivector and dotting with its corresponding normal are identical. Formally, this is an application of Hodge duality, which (in 3D) interchanges bivectors and their normals—but more on that in a future article.
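The equivalence between wedging with $B$ and dotting with $(p, q, r)$ can be checked with a few lines of Python. This is a sketch under the conventions used above; the helper names and sample vectors are assumptions for illustration.

```python
def wedge2(u, v):
    """Coordinates (p, q, r) of u ^ v in the basis e_yz, e_zx, e_xy."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def wedge3(u, v, w):
    """Coefficient of e_xyz in u ^ v ^ w (a 3x3 determinant)."""
    return (u[0] * (v[1] * w[2] - v[2] * w[1])
            + u[1] * (v[2] * w[0] - v[0] * w[2])
            + u[2] * (v[0] * w[1] - v[1] * w[0]))


def dot(a, b):
    """Ordinary dot product of two 3-component tuples."""
    return sum(x * y for x, y in zip(a, b))


u, v = (1.0, 0.0, 2.0), (0.0, 3.0, 1.0)
n = wedge2(u, v)                 # bivector coordinates (p, q, r), read as a normal

in_plane = (2.0, -3.0, 3.0)      # 2u - v, which lies in the plane of u and v
off_plane = (1.0, 1.0, 0.0)      # a vector outside that plane

print(wedge3(u, v, in_plane), dot(n, in_plane))     # both zero
print(wedge3(u, v, off_plane), dot(n, off_plane))   # both -7.0
```

With this choice of basis, the coordinates returned by `wedge2` are also the components of the cross product $u \times v$, which is the familiar way of getting a normal from two vectors in a plane.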

Further Questions

We’ve seen that normal vectors in 3D can be thought of as Grassmann bivectors, at least to an extent. We’ve also seen geometrically why the cofactor matrix is the right thing to use to transform a bivector. This provides a somewhat more satisfying answer than “the algebra works out that way” to our original question of why some transformations make a distinction between ordinary vectors and normal vectors.

However, there are still a few remaining issues that I’ve glossed over. I said that bivectors are “isomorphic” to normal vectors, meaning there’s a one-to-one relationship between them. But what’s that relationship, exactly? Relatedly, why did we end up with the cofactor matrix instead of the inverse transpose? They’re proportional to each other, and one could make a case that it doesn’t really matter in practice which you use, as we usually don’t care about the magnitudes of normal vectors (we typically normalize them anyway). But we (or, well, I) would still like to understand the origin of this discrepancy.

Another question: in our “apéritif” at the top of this article, we encountered units with both positive and negative scaling powers, ranging from −3 to +3. We’ve now seen that Grassmann $k$-vectors have scaling powers of $k$, from 0 to 3. But what about vectorial quantities with negative scaling powers? Do those exist, and if so, what are they?

In the next part of this series, we’ll dig deeper into this and complicate our geometric story still further. 🤓