Rotations And Infinitesimal Generators

September 18, 2011 · Math

In this post I’d like to talk about rotations in three-dimensional space. As you can imagine, rotations are pretty important transformations! They commonly show up in physics as an example of a symmetry transformation, and they have practical applications in fields like computer graphics.

Like any linear transformation, rotations can be represented by matrices, and this provides the best representation for actually computing with vectors, transformations, and suchlike. Various formulas for rotation matrices are well-known and can be found in untold books, papers, and websites, but how do you actually derive these formulas?

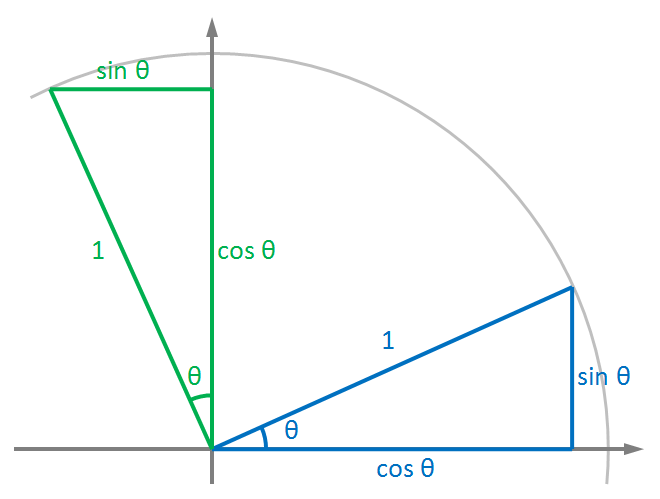

If you know a little trigonometry, you can work out the 2D rotation matrix formula by drawing a diagram like this:

The rotation takes the vector $(1, 0)$ to $(\cos\theta, \sin\theta)$ and the vector $(0, 1)$ to $(-\sin\theta, \cos\theta)$. This is just what we need, since in a matrix the first column is just the output when you put in a unit vector along the $x$-axis; the second column is the output for a unit vector along the $y$-axis, and so on. So the 2D rotation matrix is:

$$R(\theta) = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}$$

You can expand this 2×2 matrix into a 3×3 one and rearrange a few things to get rotations around the $x$, $y$, and $z$ axes. That’s all well and good, but what if you want a rotation around some other axis, one that’s not lined up with $x$, $y$, or $z$? Trying to figure out that formula directly with trigonometry is probably hopeless!
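As a quick sanity check on the column-by-column reasoning above, here is a minimal Python sketch. The helper names `rotation_2d` and `apply` are made up for this illustration; the idea is just to build the matrix from the images of the two unit vectors and confirm that a quarter turn carries the $x$ unit vector onto the $y$ unit vector.

```python
import math

def rotation_2d(theta):
    """Return the 2x2 matrix for a counter-clockwise rotation by theta (radians)."""
    c, s = math.cos(theta), math.sin(theta)
    # First column: image of (1, 0). Second column: image of (0, 1).
    return [[c, -s],
            [s,  c]]

def apply(m, v):
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in m]

# A quarter turn should send (1, 0) to (0, 1), up to floating-point rounding.
quarter_turn = apply(rotation_2d(math.pi / 2), [1.0, 0.0])
print(quarter_turn)
```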

One way to do it is to combine rotations together to achieve the desired result. We could do something like: work out the polar coordinates of the axis we want to rotate around, then apply some rotations around the $y$ and $z$ axes that take that axis onto the $x$-axis. Then do an $x$-rotation by the desired angle, and then undo the $y$ and $z$ rotations to get the axis back to where it was.
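The combine-rotations recipe can be sketched roughly as follows. This is only one way to fill in the details, and it rests on some assumptions: the axis is a unit vector, the alignment uses a $z$-rotation followed by a $y$-rotation to bring the axis onto the $x$-axis, and all function names are hypothetical.

```python
import math

def matmul(a, b):
    """Product of two 3x3 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def transpose(m):
    return [list(col) for col in zip(*m)]

def apply(m, v):
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in m]

def rot_x(t):
    c, s = math.cos(t), math.sin(t)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rot_y(t):
    c, s = math.cos(t), math.sin(t)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def rot_z(t):
    c, s = math.cos(t), math.sin(t)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def rotate_about_axis_composed(axis, theta):
    """Rotation about a unit-length axis, built only from x, y, z rotations."""
    ax, ay, az = axis
    phi = math.atan2(ay, ax)                    # heading of the axis in the xy-plane
    beta = math.atan2(az, math.hypot(ax, ay))   # elevation of the axis above that plane
    # Swing the axis into the xz-plane, then tip it down onto the x-axis.
    align = matmul(rot_y(beta), rot_z(-phi))
    # Align, rotate about x, then undo the alignment (the inverse of a rotation is its transpose).
    return matmul(transpose(align), matmul(rot_x(theta), align))

# A 120-degree turn about the (1, 1, 1) diagonal should cycle x -> y -> z -> x.
n = 1.0 / math.sqrt(3.0)
cycled = apply(rotate_about_axis_composed((n, n, n), 2.0 * math.pi / 3.0), [1.0, 0.0, 0.0])
print(cycled)
```

It works, but notice how much bookkeeping is involved: two auxiliary angles, three elementary rotations, and a careful ordering of five matrix products.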

That will work, but it’s inelegant. Since this is a blog about theoretical stuff, I’m going to talk about a more elegant way to do it, using a thing called an infinitesimal generator!

The basic idea of this is to first work out the formula for an infinitesimal rotation - a rotation by a very small angle. What’s the advantage of this? Everything’s simpler in infinitesimals, because you’re allowed to simply ignore things that are “too small to matter”. Infinitesimal math lets you make fantastically crude approximations and not worry about it because eventually, you’re going to take the limit as the infinitesimal thing goes to zero, and in that limit your approximation becomes exact! In this case (and I’m giving away a bit of the story here), once we know the formula for an infinitesimal rotation, we’ll integrate it to get the formula for a finite rotation.

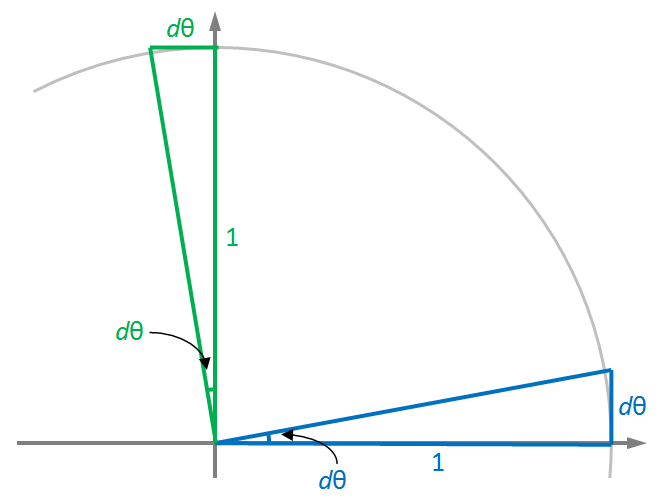

Let’s return to the diagram above, but now consider a rotation by an infinitesimal angle . Imagine taking the Cartesian coordinate system and just barely starting to turn it counter-clockwise. What happens to the point ? It goes straight up! Eventually, if you keep rotating, it’ll start to curve over to the left…but since this is just an infinitesimal rotation, it doesn’t have time to do that.

Surely, you say, it doesn’t go straight up? It must go a little to the left, mustn’t it? Yes, it does…but this is one of those “too small to matter” things that you can just ignore! The smaller is, the closer to straight-up the point goes, and that justifies treating it as if it just goes straight up all the time.

An analogous thought process about the point reveals that it goes straight to the left, neither up nor down (within our approximation). Here’s the diagram again, modified with these observations:

As before, the effect of the rotation on these two points is all we need to get the matrix for an infinitesimal rotation:

$$r(d\theta) = \begin{bmatrix} 1 & -d\theta \\ d\theta & 1 \end{bmatrix}$$

(I’m using lowercase letters, e.g. $r$, for infinitesimal transformations, and uppercase letters like $R$ for finite ones.) Anyway, this looks a lot simpler than the first matrix we found, and we didn’t need any trigonometry to work it out!

Conceptually, what we need to do next is accumulate the effect of many successive infinitesimal rotations, to get a finite rotation. Technically, this is going to be accomplished by integration. But before we can integrate anything, we need a differential equation, so we have to fiddle with the formula a bit to get it into that shape. Let’s start with a vector $v$ and apply the infinitesimal rotation to it; that’ll cause $v$ to change by some small vector $dv$:

$$\begin{aligned}
v + dv &= r(d\theta)\, v \\
dv &= \bigl(r(d\theta) - I\bigr)\, v \\
dv &= \begin{bmatrix} 0 & -d\theta \\ d\theta & 0 \end{bmatrix} v \\
\frac{dv}{d\theta} &= \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix} v \equiv G v
\end{aligned}$$

(In the second line there, $I$ is the identity matrix.) Now we have a differential equation that tells us how $v$ changes with $\theta$ for very small values of $d\theta$. The matrix in the last line, which I’m naming $G$, is the “infinitesimal generator” of 2D rotation! It’s a matrix that converts a vector into its derivative with respect to infinitesimal rotations.
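Before doing the integration properly, it may help to see the "accumulate many tiny rotations" idea numerically. The sketch below is an illustration only, with hypothetical names: it multiplies together many copies of the small-angle matrix (identity plus a small multiple of the generator) and compares the result against the trigonometric rotation matrix.

```python
import math

def matmul2(a, b):
    """Product of two 2x2 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def compound_small_rotations(theta, steps):
    """Apply the crude small-angle matrix `steps` times, each by theta / steps."""
    d = theta / steps
    small = [[1.0, -d],
             [d, 1.0]]            # identity + d * generator
    result = [[1.0, 0.0],
              [0.0, 1.0]]
    for _ in range(steps):
        result = matmul2(small, result)
    return result

theta = math.pi / 3
approx = compound_small_rotations(theta, 100_000)
exact = [[math.cos(theta), -math.sin(theta)],
         [math.sin(theta),  math.cos(theta)]]
max_error = max(abs(approx[i][j] - exact[i][j]) for i in range(2) for j in range(2))
print(max_error)  # shrinks as `steps` grows
```

Each individual step is slightly wrong (it stretches vectors a tiny bit), but the accumulated error goes to zero as the step count increases, which is the sense in which the crude approximation becomes exact in the limit.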

The Exponential Map

At this point, we need to take a short detour into the world of differential equations. We have a differential equation in which the derivative of a vector, $dv/d\theta$, is equal to a matrix, $G$, multiplied by $v$ itself. This looks very similar to a famous differential equation about real numbers:

$$\frac{dx}{dt} = a x$$

whose solution is the exponential function, $x(t) = x_0 e^{at}$ (where $x_0$ is a constant of integration, which equals $x(0)$). Working purely by analogy, we might hypothesize that the solution to our original differential equation is:

$$v(\theta) = e^{\theta G}\, v(0)$$

Now, instead of the ordinary exponential function on real numbers, we have some kind of exponential of a matrix, where $e$ “raised to the power of” a matrix gives us back another matrix. Does this even make sense? It turns out that it does! This “matrix exponential” is a mathematical entity called the exponential map, a generalization of the exponential function to a broad range of contexts. It obeys many of the same rules as the familiar exponential function, so we can prove that it really does solve our differential equation!

Now we just need to calculate it. One way of defining the ordinary exponential function is by its Taylor series:

$$e^x = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \frac{x^4}{4!} + \cdots$$

The same Taylor series can be straightforwardly translated to one for the exponential map on matrices! This implies that:

$$R(\theta) = e^{\theta G} = I + \theta G + \frac{\theta^2}{2!} G^2 + \frac{\theta^3}{3!} G^3 + \frac{\theta^4}{4!} G^4 + \cdots$$

We’ve made some progress. Instead of a differential equation, we now have an infinite series for explicitly calculating a rotation matrix for any angle! We can almost split this into the four individual matrix elements and sum up each series individually - but first we need to see what the integer powers of $G$ look like.
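The series can also be summed by brute force, which makes a nice check before simplifying it by hand. The following sketch (the function name `expm_taylor` and the 30-term cutoff are choices made for this illustration, not a production-quality matrix exponential) truncates the Taylor series and applies it to the angle times the 2D generator.

```python
import math

def matmul(a, b):
    """Product of two square matrices stored as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def expm_taylor(m, terms=30):
    """Truncated Taylor series I + M + M^2/2! + ... (fine for small matrices and angles)."""
    n = len(m)
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]          # M^0 / 0! = I
    for k in range(1, terms):
        term = matmul(term, m)                 # now M^k / (k-1)!
        term = [[x / k for x in row] for row in term]   # now M^k / k!
        result = [[r + t for r, t in zip(r_row, t_row)]
                  for r_row, t_row in zip(result, term)]
    return result

theta = 0.7
G = [[0.0, -1.0],
     [1.0,  0.0]]
R = expm_taylor([[theta * g for g in row] for row in G])
print(R)
print([[math.cos(theta), -math.sin(theta)], [math.sin(theta), math.cos(theta)]])
```

The two printed matrices should agree to within floating-point rounding, which is what the regrouping argument in the rest of this section explains analytically.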

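Let’s just calculate the first few powers and see what happens:

$$\begin{aligned}
G &= \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix} &
G^2 &= \begin{bmatrix} -1 & 0 \\ 0 & -1 \end{bmatrix} = -I &
G^3 &= \begin{bmatrix} 0 & 1 \\ -1 & 0 \end{bmatrix} = -G \\
G^4 &= \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix} = I &
G^5 &= \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix} = G
\end{aligned}$$

Woah! That’s interesting - the fifth power of $G$ is the same as $G$ itself. This means that if we kept going, we’d just generate the same four matrices over and over again. Note also that $G^3 = -G$, and $G^4 = -G^2$. Not only do the matrices repeat every four powers, they repeat with a sign change every two powers.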
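If you would rather let a computer do the multiplying, a few lines of Python (a throwaway sketch with made-up names) reproduce the cycle of powers:

```python
def matmul(a, b):
    """Product of two 2x2 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

G = [[0, -1],
     [1,  0]]

# powers[n - 1] holds the n-th power of G, for n = 1..5.
powers = [G]
for _ in range(4):
    powers.append(matmul(powers[-1], G))

for n, p in enumerate(powers, start=1):
    print(n, p)
```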

This is very similar to the behavior of a couple of other famous Taylor series! The Taylor series of the sine and cosine functions are the same as that of the exponential function…except that they only include every other power, and alternate in sign from one term to the next, with sine taking the odd powers ($\sin\theta = \theta - \theta^3/3! + \theta^5/5! - \cdots$) and cosine taking the even powers ($\cos\theta = 1 - \theta^2/2! + \theta^4/4! - \cdots$). (This is the basis of Euler’s formula.)

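If we regroup the terms in our series for $R(\theta)$ into odd and even, and use the fact that the powers of $G$ repeat with sign changes, we get:

$$\begin{aligned}
R(\theta) &= \left(1 - \frac{\theta^2}{2!} + \frac{\theta^4}{4!} - \cdots\right) I + \left(\theta - \frac{\theta^3}{3!} + \frac{\theta^5}{5!} - \cdots\right) G \\
&= I \cos\theta + G \sin\theta \\
&= \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}
\end{aligned}$$

This final matrix is the same as in the very first equation in this article, but now we’ve gotten there through a completely different route - we first worked out the generator matrix for an infinitesimal rotation, then we calculated the exponential of that matrix (multiplied by $\theta$) to get the matrix for a finite rotation!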

Axis-Angle Rotations in 3D

We can apply the same approach to solve the problem I posed near the top of this article: to find the matrix of a rotation about an arbitrary axis. Let’s identify this axis by $\hat{a} = (a_x, a_y, a_z)$, a unit vector pointing along the axis. Our goal is to find an expression for $R_{\hat{a}}(\theta)$, the 3×3 matrix that rotates around $\hat{a}$ by an angle $\theta$. As before, we’ll begin by considering an infinitesimal rotation, and working out the generator $G_{\hat{a}}$.

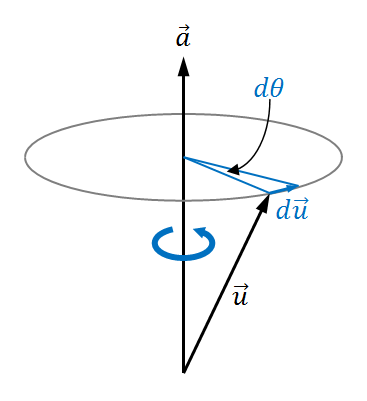

Let’s consider the action of a rotation around by an infinitesimal angle on an arbitrary 3D vector . What will happen to ? In the infinitesimal approximation, it moves in a direction straight out of the plane containing and , as shown here:

We can express this using the vector cross product:

$$dv = (\hat{a} \times v)\, d\theta$$

(Note that the length of the cross product vector is related to the lengths of the two vectors being crossed. I leave it as an exercise for the reader to show that the length of $dv$ is correct in the above equation!)
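For readers who want a numerical hint for that exercise, here is a small sketch. It assumes a particular unit axis and test vector chosen arbitrarily for illustration, and checks that the length of the cross product equals the distance from the vector's tip to the rotation axis, i.e. the radius of the circle the tip travels on. A point on a circle of that radius moves by radius times angle, which is why the cross product has the right length.

```python
import math

def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    return math.sqrt(dot(a, a))

axis = [0.0, 0.6, 0.8]      # arbitrary unit vector (0.36 + 0.64 = 1)
v = [1.0, 2.0, 3.0]         # arbitrary test vector

# Remove the part of v that lies along the axis; what remains is the
# radius vector of the circle that v's tip sweeps out during the rotation.
along = dot(axis, v)
perpendicular = [vi - along * ai for vi, ai in zip(v, axis)]
radius = norm(perpendicular)

velocity = cross(axis, v)   # rate of change of v per unit angle
print(radius, norm(velocity))
```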

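The cross product can also be expressed as a matrix. In this case, we need a matrix that, when multiplied by $v$, gives us $\hat{a} \times v$. This will be $G_{\hat{a}}$, the infinitesimal generator for rotations around $\hat{a}$:

$$G_{\hat{a}} = \begin{bmatrix} 0 & -a_z & a_y \\ a_z & 0 & -a_x \\ -a_y & a_x & 0 \end{bmatrix}$$

It follows that $R_{\hat{a}}(\theta) = e^{\theta G_{\hat{a}}}$, i.e. the rotation matrix we’re trying to find is the exponential of $\theta$ times the matrix above! If you work out the first few powers of $G_{\hat{a}}$ and use the fact that $\hat{a}$ is a unit vector (so $a_x^2 + a_y^2 + a_z^2 = 1$), you’ll see that it follows the same pattern that the powers of $G$ did: they repeat every four powers, and repeat with sign alternation every two, so that $G_{\hat{a}}^3 = -G_{\hat{a}}$ and $G_{\hat{a}}^4 = -G_{\hat{a}}^2$.

We can almost use the same odd/even term grouping as before to split the series into a part proportional to $\sin\theta$, and a part proportional to $\cos\theta$. However, there is one little bug to deal with. In the 2D version of this derivation, we implicitly used the fact that $-G^2 = I$, the identity matrix, when factoring $I$ out of the even terms. Here, $-G_{\hat{a}}^2 \neq I$, so we have to do a little bit of a fixup: the leading $I$ stays on its own, and only the even terms from the second power onward are grouped together.

$$\begin{aligned}
R_{\hat{a}}(\theta) &= I + \left(\theta - \frac{\theta^3}{3!} + \cdots\right) G_{\hat{a}} + \left(\frac{\theta^2}{2!} - \frac{\theta^4}{4!} + \cdots\right) G_{\hat{a}}^2 \\
&= I + G_{\hat{a}} \sin\theta + G_{\hat{a}}^2\, (1 - \cos\theta)
\end{aligned}$$

Finally, we can put everything together to get our final rotation matrix. Writing $c = \cos\theta$ and $s = \sin\theta$:

$$R_{\hat{a}}(\theta) = \begin{bmatrix}
c + a_x^2 (1 - c) & a_x a_y (1 - c) - a_z s & a_x a_z (1 - c) + a_y s \\
a_x a_y (1 - c) + a_z s & c + a_y^2 (1 - c) & a_y a_z (1 - c) - a_x s \\
a_x a_z (1 - c) - a_y s & a_y a_z (1 - c) + a_x s & c + a_z^2 (1 - c)
\end{bmatrix}$$

And there it is! Whew! That was a lot of work, but now we have an explicit matrix for arbitrary rotations around any axis in 3D space, and along the way we met a bunch of interesting concepts, such as infinitesimal math, the exponential map, and Taylor series.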
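To make the end result concrete, here is a minimal Python sketch of the axis-angle construction described above: build the cross-product matrix for the axis, square it, and combine identity, generator, and squared generator with sine and one-minus-cosine weights. The function names are hypothetical, and the axis is assumed to already be normalized.

```python
import math

def matmul(a, b):
    """Product of two 3x3 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def apply(m, v):
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in m]

def cross_matrix(axis):
    """Matrix that maps v to (axis x v): the generator for rotations about `axis`."""
    ax, ay, az = axis
    return [[0.0, -az,  ay],
            [ az, 0.0, -ax],
            [-ay,  ax, 0.0]]

def axis_angle_matrix(axis, theta):
    """Rotation by theta about a unit-length axis: I + sin * G + (1 - cos) * G^2."""
    g = cross_matrix(axis)
    g2 = matmul(g, g)
    s, c = math.sin(theta), math.cos(theta)
    return [[(1.0 if i == j else 0.0) + s * g[i][j] + (1.0 - c) * g2[i][j]
             for j in range(3)]
            for i in range(3)]

# A 120-degree turn about the (1, 1, 1) diagonal should cycle x -> y -> z -> x.
n = 1.0 / math.sqrt(3.0)
R = axis_angle_matrix((n, n, n), 2.0 * math.pi / 3.0)
print(apply(R, [1.0, 0.0, 0.0]))
```

Compared with chaining elementary rotations, this needs no auxiliary angles and has no special cases for axes that happen to line up with a coordinate direction.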

Rotations are truly a fascinating set of transformations, and in this post I’ve only just scratched the surface of the interesting structure that they have. Rotations in any number of dimensions form a mathematical entity called a Lie group, and the fact that they can be produced by infinitesimal generators is intimately linked with the special properties of Lie groups! But that’s a topic for a future post.